Information Literacy Instruction for the Post-Truth Era

The 2016 U.S. presidential election marked a watershed moment in how society engages with information, ushering in what many scholars describe as a “post-truth era” characterized by widespread misinformation, disinformation, and a fundamental erosion of institutional trust (1). In this environment, where fabricated content spreads rapidly across digital platforms and the boundaries between authentic and synthetic media blur, information literacy has evolved from an academic skill to a critical survival competency. Educators now face the urgent challenge of preparing students to navigate a digital landscape where deepfakes, algorithmic manipulation, and coordinated disinformation campaigns threaten democratic institutions and individual decision-making.

The Post-Truth Challenge in Education

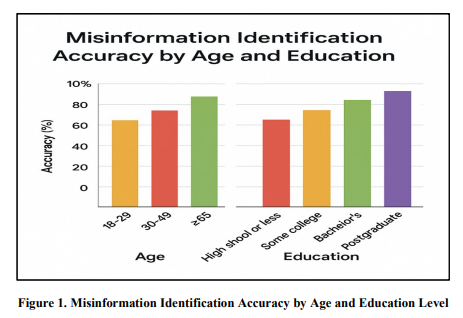

The post-truth phenomenon goes beyond the mere prevalence of false information; it reflects a systematic undermining of shared epistemic standards and of objective truth itself. Research demonstrates that misinformation spreads rapidly through algorithm-driven platforms, exploiting cognitive biases that reinforce erroneous beliefs (2). Studies have found that most students, from middle school through college, struggle to distinguish between credible and unreliable news articles, and that adults face similar challenges.

Librarians and information literacy educators have long recognized the importance of critical evaluation skills, but the scale and sophistication of contemporary information manipulation demand new pedagogical approaches (1, 3). Echo chambers, algorithmic curation, and viral communication patterns create polarization and fragment shared understanding of reality (4).

Understanding and Teaching Deepfake Detection

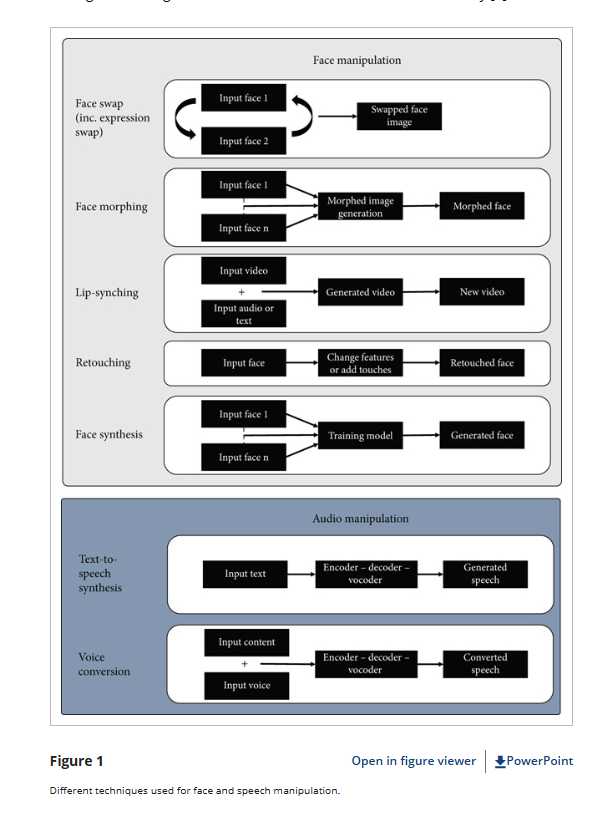

Deepfakes—AI-generated synthetic media that manipulate visual and audio content—pose one of the most pressing threats to information authenticity. The scale of this threat has exploded: deepfake files surged from 500,000 in 2023 to a projected 8 million in 2025, with an annual growth rate nearing 900% (5). In the first quarter of 2025 alone, there were 179 deepfake incidents, surpassing the total for all of 2024 by 19% (5). The financial impact is staggering—losses in North America exceeded $200 million in just the first quarter of 2025 due to deepfake fraud (5).
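As a quick sanity check that students could reproduce, the short sketch below works through the arithmetic implied by the figures quoted above. It uses only the numbers in the paragraph. The variable names are illustrative, and the assumption of steady year-on-year compounding between 2023 and 2025 is ours, not the source's.

```python
# Figures quoted in the text (source 5); variable names are illustrative.
files_2023 = 500_000
files_2025 = 8_000_000          # projected

# Overall growth across the two-year span.
overall_multiple = files_2025 / files_2023

# ASSUMPTION: growth compounds evenly over the two years.
per_year_multiple = overall_multiple ** 0.5
per_year_growth_pct = (per_year_multiple - 1) * 100

# "179 incidents in Q1 2025, 19% above the 2024 total" implies this 2024 total.
incidents_q1_2025 = 179
implied_2024_total = incidents_q1_2025 / 1.19

print(f"overall multiple: {overall_multiple:.0f}x")
print(f"per-year growth if compounding evenly: {per_year_growth_pct:.0f}%")
print(f"implied 2024 incident total: {implied_2024_total:.0f}")
```

Under the even-compounding assumption, the two endpoint figures correspond to roughly a fourfold increase per year. The "nearing 900%" rate quoted by the source therefore presumably rests on a different baseline or measurement window, which is itself a useful prompt for asking students how a headline statistic was computed.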

Recent high-profile incidents illustrate the breadth of deepfake threats. In January 2026, within the first week of the new year, deepfakes appeared immediately following major news events: Trump’s Venezuela operation spurred widespread AI-generated images across social media, while an ICE officer shooting incident led to the circulation of fake, AI-edited images that many mistook for genuine documentation (6). Multiple deepfake videos of Elon Musk circulated across YouTube and X in 2025, promoting fraudulent cryptocurrency giveaways that cost victims thousands of dollars (7). During the 2025 Philippine elections, synthetic media spread misinformation, influencing public perception and decision-making (8).

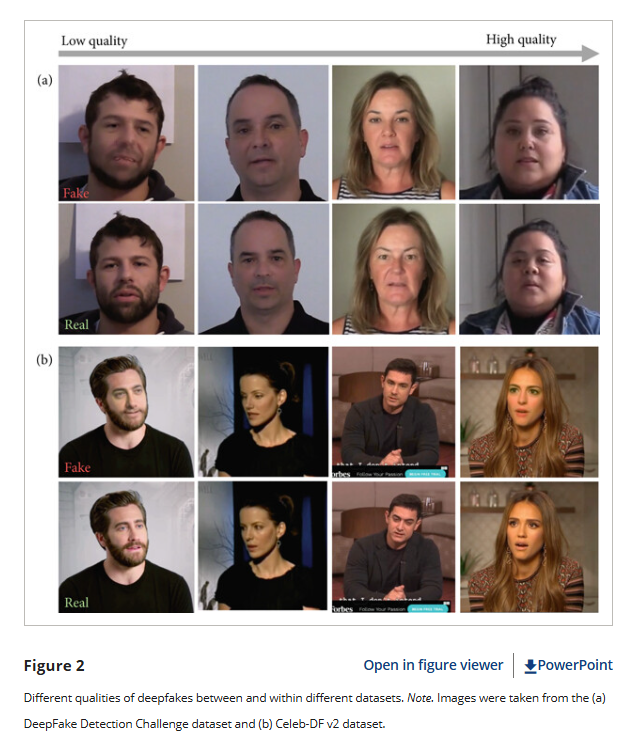

Teaching students to identify deepfakes requires both technical knowledge and critical skepticism. Detection involves looking for inconsistencies in facial movements, unnatural skin texture, abnormal blinking patterns, voice synthesis artifacts, and lip-sync discrepancies (9). However, educators must acknowledge a sobering reality: as generative AI technology advances, manual detection becomes increasingly difficult. Recent research reveals that interventions like raising awareness about deepfakes or providing written detection tips have proven largely ineffective at improving detection rates (10). Even more concerning, experts warn that by 2026, deepfakes will move toward real-time synthesis, capable of producing videos that closely resemble human nuances, making them harder to detect (11). As one NBC News report from January 2026 noted, “In terms of just looking at an image or a video, it will essentially become impossible to detect if it’s fake” (6).

More promising approaches focus on developing broader media literacy competencies rather than checklist-based detection strategies. The News Literacy Project’s “Rumor Guard 5 Factors” and similar frameworks encourage students to evaluate source credibility, cross-reference claims, and consider the context and motivation behind content creation (12). Verification tools, including reverse image searches, fact-checking websites like Snopes and FactCheck.org, and AI-detection software such as Microsoft’s Video Authenticator, provide students with practical resources for authentication (13).

Recent benchmark evaluations underscore both the challenge and necessity of hybrid approaches. The DeepFake-Eval-2024 benchmark demonstrates that academic detection models often suffer performance declines of up to 50% when confronted with real-world deepfakes. In comparison, even the best commercial video detectors achieve only approximately 78% accuracy—still below the estimated 90%

Addressing Algorithmic Bias in Educational Contexts

Algorithmic bias presents another critical dimension of information literacy in the post-truth era. Educational AI systems—from admissions algorithms to learning management platforms—can perpetuate and amplify societal inequities when trained on biased historical data. Research demonstrates that removing race data from college admissions algorithms reduces diversity without improving academic merit, highlighting how seemingly neutral technical solutions may produce discriminatory outcomes (15). Recent developments underscore the urgency of addressing these issues: in May 2025, a federal judge allowed a collective action lawsuit to proceed against Workday’s AI-based applicant screening system, which allegedly discriminated against job seekers based on age, race, and disability (16).

The manifestations of algorithmic bias extend across multiple dimensions. A study published in August 2025 using major AI tools revealed that images featuring braids and natural Black hairstyles received significantly lower “intelligence” and “professionalism” scores, a bias rarely observed with images of white women, demonstrating how AI systems can encode and perpetuate harmful cultural stereotypes (16). In 2025, researchers at the University of Melbourne discovered that AI-powered hiring tools struggled to accurately evaluate candidates with speech disabilities or heavy non-native accents, revealing ableist biases embedded in employment screening technologies (17). Additionally, a 2025 study by the Anti-Defamation League found that several major large language models, including ChatGPT, Llama, Claude, and Gemini, exhibited various forms of bias in their outputs (18).

Teaching about algorithmic bias requires helping students understand both technical and social dimensions of automated systems. Effective pedagogical strategies include hands-on activities in which students create their own simple classifiers, enabling them to experience firsthand how selecting training data influences algorithmic behavior (19). Research from middle school implementations shows that when students engage in data collection, curation, and model training, they develop a deeper understanding of how biases enter AI systems and produce differential impacts across demographic groups (20).
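A classroom activity of this kind might look something like the following sketch. It is not drawn from the cited studies: the nearest-centroid classifier, the single numeric feature, and the two groups' data are all hypothetical, chosen only so that the effect of training-data selection is easy to see.

```python
from statistics import mean

def train_centroids(examples):
    """Learn one centroid (mean feature value) per label from (feature, label) pairs."""
    by_label = {}
    for feature, label in examples:
        by_label.setdefault(label, []).append(feature)
    return {label: mean(values) for label, values in by_label.items()}

def predict(centroids, feature):
    """Return the label whose centroid lies closest to the feature."""
    return min(centroids, key=lambda label: abs(feature - centroids[label]))

def accuracy(centroids, examples):
    """Share of examples whose predicted label matches the true label."""
    hits = sum(predict(centroids, x) == y for x, y in examples)
    return hits / len(examples)

# HYPOTHETICAL data: the same feature runs lower for group B than for group A,
# for example because the measurement was designed around group A.
group_a = [(7, 1), (8, 1), (9, 1), (3, 0), (4, 0), (5, 0)]
group_b = [(4, 1), (5, 1), (5, 1), (0, 0), (1, 0), (2, 0)]

skewed = train_centroids(group_a)            # trained on group A only
mixed = train_centroids(group_a + group_b)   # trained on both groups

# For brevity the models are scored on the same examples they were built from.
for name, model in [("skewed", skewed), ("mixed", mixed)]:
    print(name,
          "A:", round(accuracy(model, group_a), 2),
          "B:", round(accuracy(model, group_b), 2))
```

With these toy numbers, the model trained only on group A is perfect for group A but no better than a coin flip for group B, while the model trained on both groups performs equally for each. Students can change the training mix and watch who bears the errors.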

Additionally, educators should introduce students to fairness metrics and evaluation frameworks that assess algorithmic outputs for disparate impacts. Common mitigation strategies include adjusting sample weights, employing adversarial learning techniques, and conducting regular algorithmic audits (15). The regulatory landscape is evolving rapidly to address these concerns: Colorado legislation set to take effect in February 2026 requires deployers of “high-risk” AI systems to implement safeguards against discrimination (21). However, scholars emphasize that focusing exclusively on technical solutions proves insufficient; addressing algorithmic bias requires institutional accountability, diverse development teams, and ongoing engagement with affected communities (22).
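To make the idea of a fairness metric concrete, the sketch below computes one common measure, the ratio of selection rates between groups, and one possible form of sample-weight adjustment, often called reweighing. Both are illustrative choices rather than methods named by the sources cited here, and the records are invented. The 0.8 threshold mentioned in the comments is the "four-fifths" rule of thumb used in U.S. employment contexts, included as an assumption about what a class might compare against.

```python
from collections import Counter

def selection_rates(records):
    """records: list of (group, selected) pairs. Returns group -> share selected."""
    totals, chosen = Counter(), Counter()
    for group, selected in records:
        totals[group] += 1
        chosen[group] += int(selected)
    return {group: chosen[group] / totals[group] for group in totals}

def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(records)
    return min(rates.values()) / max(rates.values())

def reweighing_weights(records):
    """Weight for each (group, outcome) cell: P(group) * P(outcome) / P(group, outcome).

    Applying these weights makes group and outcome statistically independent
    in the weighted data.
    """
    n = len(records)
    group_counts = Counter(group for group, _ in records)
    outcome_counts = Counter(outcome for _, outcome in records)
    joint_counts = Counter(records)
    return {
        (group, outcome): group_counts[group] * outcome_counts[outcome] / (n * count)
        for (group, outcome), count in joint_counts.items()
    }

# HYPOTHETICAL decisions: 6 of 10 applicants selected in group "a", 3 of 10 in "b".
records = ([("a", True)] * 6 + [("a", False)] * 4
           + [("b", True)] * 3 + [("b", False)] * 7)

ratio = disparate_impact_ratio(records)   # compare against the 0.8 rule of thumb
weights = reweighing_weights(records)

print("selection rates:", selection_rates(records))
print("disparate impact ratio:", round(ratio, 2))
print("weights:", {cell: round(w, 3) for cell, w in weights.items()})
```

In this invented data set the ratio falls well below 0.8, and the weights raise the influence of selected applicants from group "b" while lowering that of selected applicants from group "a". As the paragraph above stresses, a metric like this flags a disparity but does not explain or remedy its cause.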

Comprehensive Strategies for Information Literacy Instruction

Developing robust information literacy for the post-truth era demands multi-faceted instructional approaches that integrate critical thinking, digital citizenship, and ethical awareness. The challenge has intensified significantly in 2026: as Instagram head Adam Mosseri noted in January 2026, “For most of my life, I could safely assume that the vast majority of photographs or videos that I see are largely accurate captures of moments that happened in real life. This is clearly no longer the case” (6). This collapse of default trust in visual media represents a fundamental shift requiring new educational paradigms.

The Digital Education Outlook 2023 emphasizes that transparency in AI systems can reduce bias by approximately 30%, suggesting that demystifying algorithmic processes constitutes an important pedagogical goal (23). However, transparency alone is insufficient when facing what UNESCO researchers describe as a “crisis of knowing itself”—where deepfakes don’t just introduce falsehoods but erode the very mechanisms by which societies construct shared understanding (24).

Effective instruction should emphasize context-specific evaluation over universal checklists. Rather than teaching students to mechanically apply the CRAAP test (Currency, Relevance, Authority, Accuracy, Purpose), educators should foster what scholars call “critical information literacy”—an approach that recognizes how power, privilege, and social position shape information production and validation (4). This involves teaching students to interrogate their own positionality, understand knowledge systems and processes, and develop epistemic virtues including intellectual humility and openness to evidence.

Libraries play a crucial, transformative role in this educational mission. Through continuous Media and Information Literacy initiatives, libraries can serve as active centers for digital education and community engagement, equipping the public with critical tools to assess the authenticity of information (2). These initiatives should extend beyond academic settings to reach diverse populations, recognizing that information literacy challenges affect all community members regardless of age or educational background.

Recent pedagogical research highlights the importance of integrating both sociocultural and cognitive approaches to literacy instruction. A 2025 study examining climate change education in rural high schools found that combining media literacy frameworks with epistemic cognition theory produced substantial learning gains, helping students understand not just how to evaluate sources but how to navigate conflicting ways of knowing (1). This dual emphasis on external evaluation and internal reasoning processes appears particularly effective for addressing polarized topics where motivated reasoning and confirmation bias present significant obstacles.

Moving Forward: From Awareness to Equity

The ultimate goal of information literacy instruction in the post-truth era extends beyond individual skill development to promoting broader educational equity and democratic participation. As algorithmic systems increasingly mediate access to information, educational opportunities, and civic engagement, ensuring that all students possess sophisticated digital literacy becomes a matter of social justice. Policymakers must ensure that privacy regulations do not inadvertently prevent researchers from identifying and addressing algorithmic bias (25). At the same time, curriculum designers should create authentic learning environments where students practice collaborative fact-checking and develop sustainable habits of critical engagement. In an era where distinguishing truth from manipulation grows increasingly difficult, information literacy represents not merely an academic competency but a fundamental prerequisite for informed citizenship.

References

1. Lathrop, B. N. (2025). Confronting the post-truth phenomenon in literacy education: The need for a critical media epistemology. Journal of Adolescent & Adult Literacy, 68(6), 665-678. https://ila.onlinelibrary.wiley.com/doi/full/10.1002/jaal.1407

2. International Journal of Current Research. (2025). Digital literacy vs. misinformation: The critical role of libraries in the post-truth era. International Journal of Current Research. https://www.journalcra.com/article/digital-literacy-vs-misinformation-critical-role-libraries-post-truth-era

3. Cooke, N. A. (2017). Fake News and Alternative Facts: Information Literacy in a Post-Truth Era. ALA Editions. https://alastore.ala.org/content/fake-news-and-alternative-facts-information-literacy-post-truth-era

4. Ruslan. (2022). Critical information literacy in the post-truth era: A strategy for facing information flow in Indonesia. ADABIYA, 24(1). https://www.researchgate.net/publication/358888829_Critical_Information_Literacy_in_the_Post-Truth_Era_A_Strategy_for_Facing_Information_Flow_in_Indonesia

5. Keepnet. (2025, November 13). Deepfake statistics & trends 2025: Key data & insights. https://keepnetlabs.com/blog/deepfake-statistics-and-trends

6. NBC News. (2026, January 9). AI is intensifying a ‘collapse’ of trust online, experts say. https://www.nbcnews.com/tech/tech-news/experts-warn-collapse-trust-online-ai-deepfakes-venezuela-rcna252472

7. Norton. (2025, October 27). Top 5 ways scammers have used AI and deepfakes in 2025. https://us.norton.com/blog/online-scams/top-5-ai-and-deepfakes-2025

8. Cyble. (2025, December 11). Deepfake-as-a-Service exploded in 2025: 2026 threats ahead. https://cyble.com/knowledge-hub/deepfake-as-a-service-exploded-in-2025/

9. AI for Education. (2024, October 31). Uncovering deepfakes: Classroom guide. https://www.aiforeducation.io/ai-resources/uncovering-deepfakes

10. Somoray. (2025). Human performance in deepfake detection: A systematic review. Human Behavior and Emerging Technologies. https://onlinelibrary.wiley.com/doi/10.1155/hbe2/1833228

11. University at Buffalo. (2026, January). Deepfakes leveled up in 2025: Here’s what’s coming next. UBNow. https://www.buffalo.edu/ubnow/stories/2026/01/lyu-conversation-deep-fakes-2026.html

12. Silva, D. (2024, August 19). Teaching students about deepfakes & modified images. TeachersFirst Blog. https://teachersfirst.com/blog/2024/08/teaching-students-about-deepfakes-modified-images/

13. N.C. Cooperative Extension. (2025, March). Digital literacy for the age of deepfakes: Recognizing misinformation in AI-generated media. https://bertie.ces.ncsu.edu/2025/03/digital-literacy-for-the-age-of-deepfakes-recognizing-misinformation-in-ai-generated-media/

14. Chandra et al. (2025, March 4). DeepFake-Eval-2024 benchmark. EmergentMind. https://www.emergentmind.com/topics/deepfake-eval-2024

15. World Journal of Advanced Research and Reviews. (2025). Algorithmic bias in educational systems: Examining the impact of AI-driven decision making in modern education. World Journal of Advanced Research and Reviews, 25(01), 2012-2017. https://journalwjarr.com/sites/default/files/fulltext_pdf/WJARR-2025-0253.pdf

16. Crescendo. (2026, January 8). AI bias: 16 real AI bias examples & mitigation guide. https://www.crescendo.ai/blog/ai-bias-examples-mitigation-guide

17. AIM Multiple. (2025). Bias in AI: Examples and 6 ways to fix it in 2026. https://research.aimultiple.com/ai-bias/

18. Wikipedia. (2026, January 24). Algorithmic bias. https://en.wikipedia.org/wiki/Algorithmic_bias

19. Vartiainen, H., Kahila, J., Tedre, M., López-Pernas, S., & Pope, N. (2025). Enhancing children’s understanding of algorithmic biases in and with text-to-image generative AI. https://journals.sagepub.com/doi/10.1177/14614448241252820

20. ScienceDirect. (2025, May 23). Teaching AI awareness in middle school classrooms: Design, implementation and evaluation of two education modules on algorithmic bias and filter bubbles. https://www.sciencedirect.com/science/article/pii/S2666920X25000657

21. Cornell Journal of Law and Public Policy. (2025, November 21). DEI for AI: Is there a policy solution to algorithmic bias? https://publications.lawschool.cornell.edu/jlpp/2025/11/21/dei-for-ai-is-there-a-policy-solution-to-algorithmic-bias/

22. ResearchGate. (2025, January 31). Algorithmic bias in educational systems: Examining the impact of AI-driven decision making in modern education. https://www.researchgate.net/publication/388563395_Algorithmic_bias_in_educational_systems_Examining_the_impact_of_AI-driven_decision_making_in_modern_education

23. Schiller International University. (2025, June 23). Risks of AI algorithmic bias in higher education. https://www.schiller.edu/blog/risks-of-ai-algorithmic-bias-in-higher-education/

24. UNESCO. (2025, October 27). Deepfakes and the crisis of knowing. https://www.unesco.org/en/articles/deepfakes-and-crisis-knowing

25. OECD. (2023). Algorithmic bias: The state of the situation and policy recommendations. OECD Digital Education Outlook 2023. https://www.oecd.org/en/publications/oecd-digital-education-outlook-2023_c74f03de-en/full-report/algorithmic-bias-the-state-of-the-situation-and-policy-recommendations_a0b7cec1.html

26. Kurbanoğlu, S., Špiranec, S., Ünal, Y., Boustany, J., & Kos, D. (Eds.). (2022). Information Literacy in a Post-Truth Era: ECIL 2021. Springer. https://www.academia.edu/119850062/Information_Literacy_in_a_Post_Truth_Era

27. Library Quarterly. (2017). Posttruth, truthiness, and alternative facts: Information behavior and critical information consumption for a new age. The Library Quarterly, 87(3). https://www.journals.uchicago.edu/doi/abs/10.1086/692298