Artificial Intelligence: A Brief Overview

AI has already influenced our lives. We might not think about it all that often, but every time we use a smartphone, smart TV, streaming device, computer, or smartwatch, we are interacting with AI. It’s everywhere, much like Dick Orkin’s Chicken Man superhero character. We’ve come to rely on it to make our lives easier. Twenty years ago, if you wanted to travel somewhere unfamiliar, you needed a physical map, a printout of MapQuest directions (there and back), or someone who knew how to get there. In my case, my dad usually wrote down directions, and my mom would tell me which landmarks to look for. Now, I have an app on my phone that tells me where to go. Over the past 10 years, it has improved to warn me about speed traps, stoplights, and heavy traffic. This is only one of thousands of examples that have come into existence in the past few decades. There are far too many to cover here, so instead let’s look back at when AI started and see where it’s going.

In the early 1950s, Alan Turing was one of many scientists intrigued by the what-ifs of computers. He explored the question in his 1950 paper, “Computing Machinery and Intelligence.” Back then, computers could not remember commands, and they were costly to use. The idea of computers doing more, being more, was catching fire in the scientific and technology communities. This led to a conference where, according to Harvard University’s Science in the News blog, “it initialized the proof of concept through Allen Newell, Cliff Shaw, and Herbert Simon’s, Logic Theorist. The Logic Theorist was a program designed to mimic the problem-solving skills of a human and was funded by Research and Development (RAND) Corporation. It’s considered… to be the first artificial intelligence program and was presented at the Dartmouth Summer Research Project on Artificial Intelligence (DSRPAI) hosted by John McCarthy and Marvin Minsky in 1956… McCarthy, imagining a great collaborative effort, brought together top researchers from various fields for an open-ended discussion on artificial intelligence, the term which he coined…” From that conference sprang the possibilities for what AI has become.

Between the 1950s and 1970s, AI development was a major focus of the technological and military research communities. Its capabilities seemed endless, which made it, and still makes it, very useful for military functions. DARPA began funding research projects in the 1970s with the prompting of Marvin Minsky, who “told Life Magazine, ‘from three to eight years we will have a machine with the general intelligence of an average human being.’” It was an ambitious claim that remained unfulfilled for several years. Computers needed several more advancements before they would be useful for anything besides calculations. The 1980s brought many changes, including major advancements in technology. The re-dawning of AI was upon us.

Funding was more available, which spawned research projects across the globe.

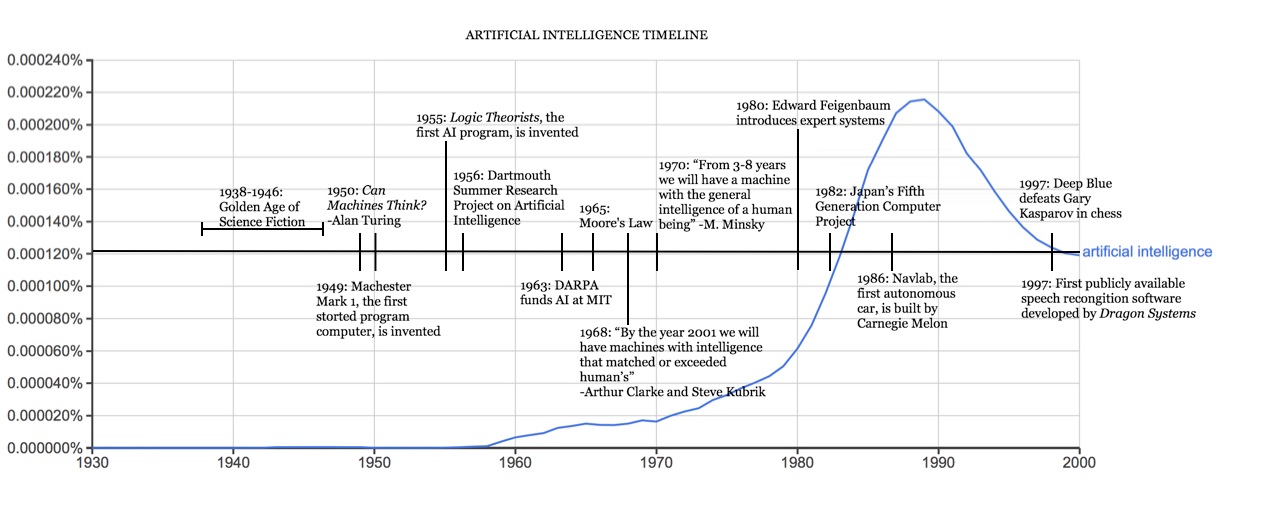

According to Harvard University’s Science in the News blog, “John Hopfield and David Rumelhart popularized ‘deep learning’ techniques which allowed computers to learn using experience… Edward Feigenbaum introduced expert systems which mimicked the decision making process of a human expert. The program would ask an expert…how to respond…and once this was learned…non-experts could receive advice from that program. Expert systems were widely used in industries. The Japanese government heavily funded expert systems and other AI related endeavors as part of their Fifth Generation Computer Project (FGCP).” These projects didn’t necessarily yield the desired results, but they may have inspired others to pursue independent projects. Below is an image from Harvard’s collection illustrating the timeline of AI development.

history of artificial intelligence

The 1990s and 2000s saw an influx of AI development. Computer systems that could anticipate and react to your needs were becoming a reality, and consumers were eager to purchase better technology. According to AI Multiple, “There has been a 14x increase in the number of active AI startups since 2000. Thanks to recent advances in deep-learning, AI is already powering search engines, online translators, virtual assistants, and numerous marketing and sales decisions.” The possibilities are truly endless, and almost every company is finding ways to make AI work for them.

From smarter search engines to apps that track your health in detail and cell phones that create Wi-Fi hotspots so you can work remotely anywhere, AI has changed our lives. It’s improving processes, systems, and user satisfaction across every industry. The future of everything is changing with each new development. This is AI, and this is just the beginning…

By Gretchen Hendrick Gardella, MLIS

Gretchen Hendrick Gardella is a Librarian with administrative, research, and vast technical skills. Ms. Gardella brings over 16 years of experience working in academic and public libraries to the discussion.